Why Engineers Choose Linux

Machine learning engineers are moving to Linux because it handles long training jobs without the stability issues found in consumer operating systems. It also cuts costs. When you're scaling up GPU clusters, avoiding licensing fees for every node is a practical necessity rather than a luxury.

The command-line interface is another benefit. Graphical interfaces have their place, but the command line offers finer control and is far easier to script and automate. Combine that with the distribution's package manager, and you have an environment that's both flexible and efficient. Community support is strong as well: most problems you run into have already been asked about and answered by experienced users.

The hardware landscape further reinforces this trend. Linux is the dominant operating system for servers, which are the workhorses of AI training and inference. It's also heavily favored in embedded systems, where AI is increasingly being deployed at the edge. STMicroelectronics' X-LINUX-AI framework, supported on Digi International's ConnectCore MP25 modules, is a good example of this trend toward embedded AI solutions. It shows how Linux is becoming integral to bringing AI capabilities to devices beyond the cloud.

If you're serious about AI development, understanding Linux is becoming a necessity rather than an option. It's not just about running existing models; it's about having the freedom to customize, optimize, and innovate.

File Management for Datasets

Machine learning projects live and die on data, and managing that data effectively is paramount. You'll spend a lot of time moving, copying, and organizing files, so getting comfortable with the basic Linux file management commands is essential. The `cp` command is your friend for creating copies of files. For example, `cp data.csv data_backup.csv` will duplicate your data file. Then there's `mv`, which can both move and rename files. `mv old_name.txt new_name.txt` renames a file, while `mv file.txt /path/to/new/location/` moves it to a different directory.
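As a minimal sketch of the copy, rename, and move operations above, the following runs entirely inside a throwaway directory created with `mktemp -d`. The file names and the `archive/` directory are placeholders chosen for illustration.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Work in a scratch directory so nothing real is touched.
workdir=$(mktemp -d)
cd "$workdir"

# Create a small stand-in for a dataset file.
printf 'id,label\n1,cat\n2,dog\n' > data.csv

# cp: duplicate the file; -p preserves timestamps and permissions.
cp -p data.csv data_backup.csv

# mv: rename in place.
mv data_backup.csv data_v1.csv

# mv: move into another directory; -n refuses to overwrite an existing file.
mkdir -p archive
mv -n data_v1.csv archive/

ls -R "$workdir"
```

The `-n` flag on `mv` is worth the habit with datasets, since a plain `mv` silently replaces a file of the same name at the destination.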

Be careful with `rm`, the remove command. It permanently deletes files, and there's no undo! Always double-check what you're deleting. A safer approach is often to move files to a temporary 'trash' directory first. The `find` command is incredibly powerful for locating files. `find . -name '*.csv'` will find all CSV files in the current directory and its subdirectories. You can combine `find` with other commands to perform actions on the found files.
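One way to sketch the "trash directory" idea and the `find` patterns above is shown below. The `trash` function and the `TRASH_DIR` location are hypothetical names made up for this example, not standard tools, and everything happens in a scratch directory.

```bash
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
cd "$workdir"
mkdir -p data/raw data/tmp
touch data/raw/a.csv data/raw/b.csv data/tmp/scratch.csv data/raw/notes.txt

# Hypothetical trash location; any directory on the same filesystem works.
TRASH_DIR="$workdir/.trash"

# trash: move files into a timestamped folder instead of deleting them.
trash() {
    local dest
    dest="$TRASH_DIR/$(date +%Y%m%d_%H%M%S)"
    mkdir -p "$dest"
    mv -- "$@" "$dest"/
}

# Preview what find matches before acting on it.
find data -type f -name '*.csv'

# Act on the matches: list sizes for every CSV under data/raw.
find data/raw -type f -name '*.csv' -exec ls -lh {} +

# "Delete" the scratch file by moving it to the trash directory.
trash data/tmp/scratch.csv

# Empty the trash only once you are sure:  rm -r -- "$TRASH_DIR"
```

Running `find` on its own first, and only then adding `-exec`, gives you a chance to see exactly which files an action will touch.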

A well-organized directory structure is crucial for larger projects. I recommend a structure that separates raw data, preprocessed data, models, and scripts. For instance: `project_name/data/raw`, `project_name/data/processed`, `project_name/models`, `project_name/scripts`. Consistent naming conventions are also important. Use descriptive names that clearly indicate the content and version of each file. Something like `data_v1.csv` or `model_trained_20240101.pth` is much better than just `data.csv`.

Consider the types of data you're working with. For images, a directory structure based on categories can be useful. For text data, you might want to separate training, validation, and test sets into different directories. Using clear and consistent organization will save you countless headaches down the line.
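The layout described above could be created in one go along these lines. This is only a sketch: the project lives under a scratch directory, and the class names (`cat`, `dog`) and the split names are examples rather than a required convention.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical project root under a scratch directory.
project="$(mktemp -d)/project_name"

# Brace expansion creates the whole tree in one call (bash, not plain sh).
mkdir -p "$project"/data/{raw,processed} "$project"/{models,scripts}

# Image data: one directory per split, then per class (names are examples).
mkdir -p "$project"/data/raw/images/{train,val,test}/{cat,dog}

# Versioned, dated file names instead of a bare data.csv or model.pth.
touch "$project/data/raw/data_v1.csv"
touch "$project/models/model_trained_$(date +%Y%m%d).pth"

find "$project" -type d | sort
```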

Managing Large Dataset Files with Find and Copy Operations

Machine learning engineers frequently work with large datasets that require careful file management. When dealing with substantial CSV files containing training data, it's essential to have reliable backup and organization strategies. The find command combined with file operations provides powerful capabilities for locating and managing these critical data files.

```bash
# Find all CSV files larger than 1 GiB in the datasets directory
find /home/mluser/datasets -type f -name "*.csv" -size +1G

# Create the backup directory if it doesn't exist
mkdir -p /backup/large_datasets

# Find and copy large CSV files to the backup location
find /home/mluser/datasets -type f -name "*.csv" -size +1G \
    -exec cp {} /backup/large_datasets/ \;

# Alternative: copy with progress indication
# (-print0 with read -d '' keeps file names with spaces or newlines intact)
find /home/mluser/datasets -type f -name "*.csv" -size +1G -print0 |
    while IFS= read -r -d '' file; do
        echo "Copying: $file"
        cp "$file" /backup/large_datasets/
    done

# Verify the copied files and their sizes
ls -lh /backup/large_datasets/*.csv
```

This approach lets you locate large dataset files and back them up before processing. The first command identifies all CSV files exceeding 1GB, while the subsequent commands show two ways to copy them to a backup location. The while loop version prints each file name as it is copied, which is particularly useful when several large files take time to transfer. Note that both variants copy into a single flat directory, so files with the same name in different subdirectories will overwrite each other.

Handling Large Model Files

Machine learning models, especially deep learning models, can be enormous. Dealing with these large files requires efficient compression and archiving tools. The `tar` command is used to create archive files, essentially bundling multiple files into a single file. It doesn't compress the files by default, though. That's where `gzip` and `bzip2` come in. `gzip` provides good compression and speed, while `bzip2` offers higher compression ratios but is slower.

You can combine `tar` with compression utilities to create compressed archives. `tar -czvf archive.tar.gz directory_to_archive` creates a gzipped tar archive. Let’s break that down: `c` creates the archive, `z` uses gzip compression, `v` is verbose (shows the files being added), and `f` specifies the archive filename. Similarly, `tar -cjvf archive.tar.bz2 directory_to_archive` uses bzip2 compression. Choosing between `gzip` and `bzip2` depends on your priorities – speed versus space savings.

To extract files from an archive, use `tar -xzvf archive.tar.gz` for gzipped archives and `tar -xjvf archive.tar.bz2` for bzip2 archives. The `x` option tells tar to extract. It's also good practice to verify the integrity of an archive before extracting it, especially if it has been transferred over a network. Comparing a checksum such as SHA-256 against the one computed at the source confirms the file wasn't corrupted in transit. MD5 also catches accidental corruption, but it is not safe against deliberate tampering.
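Here is a rough sketch of the full round trip: archive, checksum, verify, extract. It assumes GNU coreutils (`sha256sum`), which ships with most Linux distributions, and uses a made-up `model_dir` filled with dummy data in a scratch directory.

```bash
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
cd "$workdir"

# Stand-in for a model directory (file names are illustrative).
mkdir -p model_dir
head -c 1048576 /dev/zero > model_dir/weights.bin
echo '{"layers": 2}' > model_dir/config.json

# c = create, z = gzip, f = archive name (v omitted to keep output quiet).
tar -czf model.tar.gz model_dir

# Record a checksum next to the archive before transferring it.
sha256sum model.tar.gz > model.tar.gz.sha256

# On the receiving side: verify first, then list, then extract.
sha256sum -c model.tar.gz.sha256
tar -tzf model.tar.gz
mkdir -p restored
tar -xzf model.tar.gz -C restored

# Compare the extracted tree with the original.
diff -r model_dir restored/model_dir && echo "archive round-trip OK"
```

Listing the contents with `tar -t` before extracting is a cheap way to check where files will land, and `-C` keeps the extraction out of your current directory.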

While `.tar.gz` and `.tar.bz2` are common, you might also encounter `.zip` archives. The `unzip archive.zip` command extracts `.zip` files. Don’t underestimate the value of good compression; it can save significant storage space and bandwidth, especially when working with large datasets or deploying models.

Process Management: Training and Inference

Training machine learning models and running inference servers can be resource-intensive and time-consuming. Effectively managing these processes is crucial. `top` and `htop` are your go-to tools for monitoring system resource usage. `htop` is a more visually appealing and interactive version of `top`. They show you which processes are consuming the most CPU and memory.

The `ps` command provides a snapshot of running processes. `ps aux` lists all processes, showing the user, process ID (PID), CPU usage, memory usage, and the command being executed. You'll need the PID to terminate a process. The `kill` command sends a signal to a process, usually to terminate it. `kill <PID>` sends the default termination signal (SIGTERM), which gives the process a chance to shut down cleanly. Be careful with `kill -9 <PID>`: it sends SIGKILL, which the process cannot catch, so it gets no chance to save checkpoints or flush files, and data can be lost.
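The "ask politely, then force" pattern can be sketched like this. A `sleep` command stands in for a real training process, and the five-second grace period is an arbitrary choice for the example.

```bash
#!/usr/bin/env bash
set -u

# Start a harmless background job to stand in for a training process.
sleep 300 &
pid=$!
echo "started PID $pid"

# Inspect it: selected columns for one PID instead of scanning `ps aux`.
ps -o pid,user,pcpu,pmem,etime,args -p "$pid"

# Ask politely first: plain `kill` sends SIGTERM, which a program can handle
# to write a checkpoint before exiting.
kill "$pid"

# Give it a few seconds to exit, then escalate to SIGKILL only if needed.
for _ in 1 2 3 4 5; do
    kill -0 "$pid" 2>/dev/null || break   # kill -0 only tests existence
    sleep 1
done
if kill -0 "$pid" 2>/dev/null; then
    echo "still running, sending SIGKILL"
    kill -9 "$pid"
fi

# Collect the job's exit status (143 = 128 + 15, i.e. ended by SIGTERM).
wait "$pid"
status=$?
echo "exit status: $status"
```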

`nohup` is incredibly useful for running processes in the background, even after you log out. `nohup python train.py > output.log 2>&1 &` runs `train.py` in the background, redirects standard output and standard error to `output.log`, and continues running even if you disconnect from the server. The `&` symbol puts the process in the background.
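To see the pieces working together, here is a small sketch in which a three-line shell script (`train.sh`, a made-up stand-in for `python train.py`) is launched with `nohup`, its PID is saved to a file, and the log is inspected while it runs.

```bash
#!/usr/bin/env bash
set -u

workdir=$(mktemp -d)
cd "$workdir"

# Stand-in for `python train.py`: prints a few "epochs" and exits.
cat > train.sh << 'EOF'
#!/usr/bin/env bash
for epoch in 1 2 3; do
    echo "epoch $epoch done"
    sleep 1
done
EOF
chmod +x train.sh

# Same shape as the command above: survive hangups, capture stdout and
# stderr in one log, run in the background.
nohup ./train.sh > output.log 2>&1 &

# $! is the PID of the most recent background job; save it for later.
echo $! > train.pid
echo "training started with PID $(cat train.pid)"

# Check on it without attaching: is it alive, and what has it logged?
# (use `tail -f output.log` to follow the log live)
sleep 1
kill -0 "$(cat train.pid)" 2>/dev/null && echo "still running"
tail -n 5 output.log

# Block until the job finishes (only works from the launching shell).
wait "$(cat train.pid)"
echo "job exit status: $?"
```

Saving the PID to a file means you can find and stop the job after logging back in, when `$!` from the original shell is long gone.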

For longer-running tasks, consider using `screen` or `tmux`. These are terminal multiplexers that allow you to create persistent terminal sessions. You can detach from a session and reattach later, even from a different location. This is invaluable for training jobs that might take days or weeks to complete. These tools give you a safety net if your connection drops – the training won’t be interrupted.
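If you prefer `tmux`, a detached session can be started from a script as well as by hand. The helper below, `start_training_session`, is a hypothetical wrapper written for this example. It only uses `tmux has-session` and `tmux new-session -d`, and it assumes `tmux` is installed.

```bash
#!/usr/bin/env bash

# start_training_session NAME COMMAND...
# Hypothetical helper: runs COMMAND in a detached tmux session called NAME.
start_training_session() {
    local name=$1
    shift
    if ! command -v tmux >/dev/null 2>&1; then
        echo "tmux is not installed" >&2
        return 127
    fi
    # has-session exits non-zero when no session with that name exists.
    if tmux has-session -t "$name" 2>/dev/null; then
        echo "session '$name' already exists; attach with: tmux attach -t $name" >&2
        return 1
    fi
    # -d starts the session detached, so it keeps running after logout.
    tmux new-session -d -s "$name" "$*"
    echo "started; attach with: tmux attach -t $name"
}

# Typical manual workflow for reference:
#   tmux new -s train          # open a named session, start the job inside
#   Ctrl-b d                   # detach; the job keeps running
#   tmux ls                    # list sessions after reconnecting
#   tmux attach -t train       # pick up where you left off
```

A call such as `start_training_session train python train.py` would launch the job. Keep in mind that a session started with a command closes when that command exits, so redirect output to a log file if you need it afterwards.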

Process & Session Management Tools for AI Development

| Tool | Real-time Monitoring | Process Filtering | Session Persistence | Ease of Use |
|---|---|---|---|---|
| top | Excellent, provides a dynamic real-time view of system processes. | Basic, allows sorting by CPU usage, memory, and process ID. | None, processes are not saved or restored. | Good, standard tool, widely available but can be overwhelming for new users. |
| htop | Excellent, enhanced real-time view with color-coded output and visual process trees. | Advanced, offers flexible filtering and searching of processes. | None, like 'top', it doesn't inherently persist sessions. | Very Good, more interactive and user-friendly than 'top'. |
| ps | Static snapshot, displays a list of processes at a single point in time. | Powerful, supports complex filtering and custom output columns through its options. | None, provides process information but doesn't maintain sessions. | Moderate, requires understanding of command-line options for effective use. |
| screen | Limited, primarily focused on session management, not detailed real-time monitoring. | Basic, allows process identification within a session. | Excellent, allows detaching and reattaching to sessions, preserving processes. | Moderate, requires learning a specific set of commands for session control. |
| tmux | Limited, similar to 'screen', session management is its strength. | Basic, process identification within a session. | Excellent, provides robust session management with features like splitting windows and panes. | Very Good, highly configurable and offers a more modern interface than 'screen'. |


Package Management: Your AI Toolkit

Linux package managers are essential for installing and managing the software you need for AI development. The specific package manager depends on your distribution. Debian and Ubuntu use `apt`, CentOS and RHEL use `yum` (or `dnf` in newer versions), and Arch Linux uses `pacman`. The basic commands are similar across distributions: `apt install <package>`, `yum install <package>`, `pacman -S <package>`.
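If a setup script has to run on more than one distribution, it can probe for the package manager first. The sketch below uses a hypothetical helper, `detect_pkg_install`, that only prints the command it would use rather than running it. It checks for `apt-get` instead of `apt` because `apt-get` is the variant intended for scripts, and the package names are examples.

```bash
#!/usr/bin/env bash

# detect_pkg_install: print the install command for this distribution.
# Hypothetical helper; it only prints the command, it does not run it.
detect_pkg_install() {
    if command -v apt-get >/dev/null 2>&1; then
        echo "sudo apt-get install -y"
    elif command -v dnf >/dev/null 2>&1; then
        echo "sudo dnf install -y"
    elif command -v yum >/dev/null 2>&1; then
        echo "sudo yum install -y"
    elif command -v pacman >/dev/null 2>&1; then
        echo "sudo pacman -S --noconfirm"
    else
        echo "unknown"
        return 1
    fi
}

# Example package names; these can differ between distributions.
packages="git curl"

if install_cmd=$(detect_pkg_install); then
    echo "Would run: $install_cmd $packages"
else
    echo "No supported package manager found" >&2
fi
```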

For AI/ML, you’ll likely need Python, TensorFlow, PyTorch, scikit-learn, and potentially CUDA if you're using NVIDIA GPUs. Installing these packages directly can sometimes lead to dependency conflicts. That's where virtual environments come in. `venv` (built-in to Python) and `conda` (from Anaconda) allow you to create isolated environments for each project, ensuring that dependencies don't clash.

To create a virtual environment with `venv`, use `python3 -m venv myenv`. Activate it with `source myenv/bin/activate`. Then, you can install packages using `pip`: `pip install tensorflow`. Conda provides similar functionality: `conda create -n myenv python=3.9` creates an environment, and `conda activate myenv` activates it. After activation, use `conda install tensorflow`.
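The `venv` steps above can be wrapped in a small function so every project gets the same setup. This is a sketch under assumptions: `make_project_env` and the `requirements.lock.txt` file name are invented for the example, and the install steps need network access to reach the package index.

```bash
#!/usr/bin/env bash

# make_project_env DIR: create DIR/.venv and record the installed packages.
make_project_env() {
    local project_dir=$1
    python3 -m venv "$project_dir/.venv" || return 1
    # Activation only adjusts PATH and a few variables in the current shell.
    source "$project_dir/.venv/bin/activate"
    python -m pip install --upgrade pip
    # Install the project's dependencies if it lists them.
    if [ -f "$project_dir/requirements.txt" ]; then
        python -m pip install -r "$project_dir/requirements.txt"
    fi
    # Snapshot exact versions so the environment can be rebuilt elsewhere.
    python -m pip freeze > "$project_dir/requirements.lock.txt"
    deactivate
}
```

Calling `make_project_env ~/projects/my_model` (a made-up path) would leave a ready-to-activate environment in that directory. Using `python -m pip` rather than bare `pip` makes sure the packages go into the environment's interpreter.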

Keeping your packages up to date is also important. Use `sudo apt update && sudo apt upgrade`, `sudo yum update`, or `sudo pacman -Syu` to update your system. Regular updates bring in the latest security patches and bug fixes. It's a good habit to get into even if you're using virtual environments, since system updates don't touch packages installed inside a `venv` or conda environment; those are upgraded separately with `pip` or `conda`.

- Python: The primary language for most model development.
- TensorFlow: A popular deep learning framework.
- PyTorch: Another widely used deep learning framework.
- scikit-learn: A versatile library for traditional machine learning algorithms.
- CUDA: The parallel computing platform needed for NVIDIA GPU acceleration.

Remote Access and Collaboration

Often, you'll be developing on a remote server, either because it has more powerful hardware or because it's part of a cluster. SSH (Secure Shell) is the standard protocol for secure remote access. You connect to a server using `ssh username@server_address`. For added security, use key-based authentication instead of passwords. This involves generating a key pair and copying the public key to the server.
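Generating a key pair looks roughly like this. The sketch writes the key to a scratch directory and uses an empty passphrase only so that it runs unattended. For real keys, use the default location under `~/.ssh` and set a passphrase. The comment string `ml-workstation` is a placeholder.

```bash
#!/usr/bin/env bash
set -u

# Generate into a scratch directory here; real keys normally live in ~/.ssh.
key_dir=$(mktemp -d)
key_file="$key_dir/id_ed25519"

# -t key type, -f output file, -C comment, -N passphrase (empty here only so
# the example runs unattended), -q quiet.
ssh-keygen -t ed25519 -f "$key_file" -C "ml-workstation" -N "" -q

# The .pub file is what goes on the server; the other file never leaves
# your machine.
ls -l "$key_dir"
ssh-keygen -l -f "$key_file.pub"    # show the key fingerprint

# With a real server (placeholders as in the text above):
#   ssh-copy-id -i "$key_file.pub" username@server_address
#   ssh -i "$key_file" username@server_address
```

`ssh-copy-id` appends the public key to `~/.ssh/authorized_keys` on the server, which is the "copying the public key" step described above.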

`scp` (Secure Copy) and `rsync` are used for transferring files securely between your local machine and the server. `scp file.txt username@server_address:/path/to/destination/` copies a file to the server. `rsync` is more efficient for synchronizing directories, as it only transfers the changed files. `rsync -avz /local/directory username@server_address:/remote/directory` synchronizes a directory.

Collaboration is key in most AI projects. Git is the standard version control system. You can use Git to track changes to your code, collaborate with others, and revert to previous versions if needed. Platforms like GitHub, GitLab, and Bitbucket provide hosting for Git repositories. Integrating these tools with your Linux environment allows for seamless collaboration.
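For an ML project, the first Git step is usually deciding what not to track. The sketch below sets up a repository in a scratch directory with a `.gitignore` that keeps datasets and model weights out of version control. The ignore patterns follow the directory layout suggested earlier, and the name and email are placeholders.

```bash
#!/usr/bin/env bash
set -euo pipefail

repo=$(mktemp -d)
cd "$repo"
git init -q

# Keep large artifacts out of version control (adjust to your project).
cat > .gitignore << 'EOF'
data/
models/
*.pth
__pycache__/
EOF

mkdir -p scripts data
echo 'print("training placeholder")' > scripts/train.py
echo 'id,label' > data/raw.csv          # ignored by the data/ rule above

git add .
# Identity is set inline so the example runs without global git config.
git -c user.name="Example Dev" -c user.email="dev@example.com" \
    commit -q -m "Add training script and ignore rules"

git log --oneline
git status --short --ignored
```

Only `.gitignore` and `scripts/train.py` end up in the commit. The data file stays on disk but is never tracked.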

Cloud-based Linux instances, like those offered by AWS, Azure, and Google Cloud Platform, are increasingly popular for AI development. These instances provide access to powerful hardware and scalable resources. Managing these cloud resources from the command line is becoming increasingly important, and each provider ships its own CLI for the purpose (`aws`, `az`, and `gcloud` respectively).

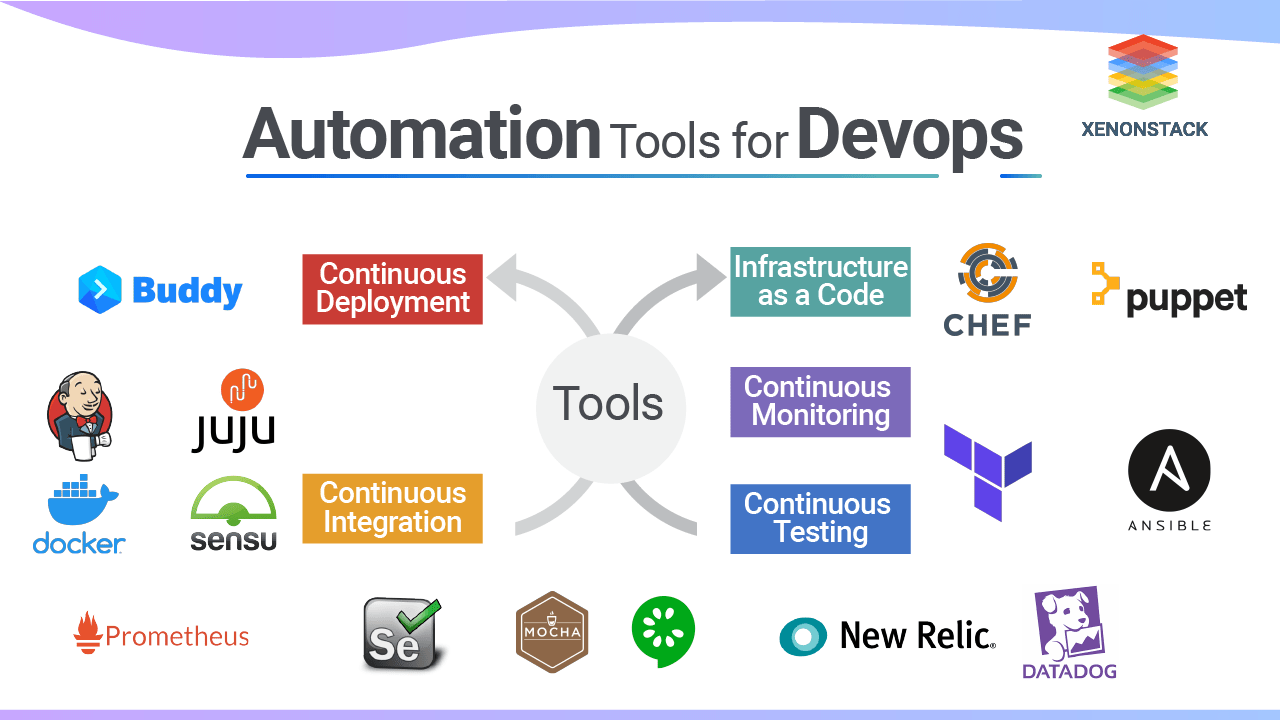

Linux Shell Scripting for Automation

Shell scripting is a powerful way to automate repetitive tasks in your AI/ML workflow. It allows you to combine multiple commands into a single script, making your work more efficient. Start by understanding variables, loops, and conditional statements. Variables store data, loops repeat a block of code, and conditional statements execute code based on certain conditions.
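The three building blocks fit in a few lines. In this minimal sketch, the run name and epoch count are invented values.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Variables: no spaces around "=", quote when expanding.
epochs=3
run_name="baseline_$(date +%Y%m%d)"

# Loop: C-style counter over the number of epochs.
completed=0
for ((epoch = 1; epoch <= epochs; epoch++)); do
    echo "[$run_name] epoch $epoch/$epochs"
    completed=$((completed + 1))
done

# Conditional: compare integers with -eq/-lt/-gt, strings with = and !=.
if [ "$completed" -eq "$epochs" ]; then
    result="finished"
else
    result="incomplete"
fi
echo "run $run_name $result"
```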

For example, a simple loop to preprocess data might look like this: `for file in *.csv; do python preprocess.py "$file" > "processed_$file"; done`. In a script file, put `#!/bin/bash` on the first line. The loop iterates through all CSV files in the current directory and applies `preprocess.py` to each one. Because the outputs also end in `.csv`, a second run would pick them up again, so in practice write them to a separate directory. Error handling is crucial. Use `if` statements to check for errors and exit the script gracefully. Logging is also important for debugging and monitoring.
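A version of that loop with error handling and logging might look like the following sketch. The `preprocess` function is a stand-in for `python preprocess.py` (it just upper-cases its input and fails on empty files), and `log` is a small helper defined here, not a built-in.

```bash
#!/usr/bin/env bash
set -uo pipefail

workdir=$(mktemp -d)
cd "$workdir"
mkdir -p raw processed
printf 'a,b\n1,2\n' > raw/good.csv
: > raw/empty.csv                      # empty file to trigger the error path

log_file="$workdir/preprocess.log"
log() { echo "$(date '+%F %T') $*" | tee -a "$log_file"; }

# Stand-in for `python preprocess.py FILE`: upper-cases the file and fails on
# empty input. Replace with the real command.
preprocess() {
    [ -s "$1" ] || return 1
    tr '[:lower:]' '[:upper:]' < "$1"
}

failed=0
for file in raw/*.csv; do
    out="processed/$(basename "$file")"
    # Writing to a separate directory keeps outputs out of the *.csv glob.
    if preprocess "$file" > "$out"; then
        log "OK    $file -> $out"
    else
        log "ERROR $file failed"
        rm -f "$out"                   # drop the partial output
        failed=$((failed + 1))
    fi
done

log "done, $failed file(s) failed"
# A real script would finish with:  [ "$failed" -eq 0 ] || exit 1
```

Counting failures and continuing, rather than stopping at the first bad file, is a design choice. For a long batch it often saves a rerun, as long as the final exit code still reports that something went wrong.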

Functions allow you to organize your code into reusable blocks. You can define a function like this: `my_function() { echo "This is my function"; }`. Note the semicolon before the closing brace, which bash requires when the body is written on one line. Then, you can call it by simply typing `my_function`. Don't forget to make your scripts executable using `chmod +x script_name.sh`. This gives the script execute permissions.
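Here is a slightly fuller sketch of a function that takes an argument and reports errors, saved to a script file and made executable. The script name `count_rows.sh` and the function `count_rows` are invented for the example.

```bash
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
script="$workdir/count_rows.sh"

# Write a small script containing a function with an argument and a return code.
cat > "$script" << 'EOF'
#!/usr/bin/env bash
# count_rows FILE: print the number of data rows (lines minus the header).
count_rows() {
    local file=$1
    [ -f "$file" ] || { echo "no such file: $file" >&2; return 1; }
    local lines
    lines=$(wc -l < "$file")
    echo $((lines > 0 ? lines - 1 : 0))
}
count_rows "$@"
EOF

printf 'id,label\n1,cat\n2,dog\n' > "$workdir/data.csv"

# Without the execute bit, running the script directly fails with
# "Permission denied".
chmod +x "$script"
rows=$("$script" "$workdir/data.csv")
echo "data rows: $rows"
```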

Consider automating tasks like data downloading, model training, evaluation, and deployment. Shell scripts can be integrated into your CI/CD pipeline to automate the entire AI/ML lifecycle. Mastering shell scripting will significantly improve your productivity and streamline your workflow.

Automated ML Workflow Script

Modern machine learning workflows on Linux benefit from automation scripts that handle the entire pipeline from data acquisition to model deployment. The following script demonstrates essential Linux commands and file operations commonly used in ML projects, including directory management, data downloading, preprocessing with standard Unix tools, and model training coordination.

```bash
#!/bin/bash
# AI Development Automation Script
# This script demonstrates essential Linux commands for ML workflows

set -e          # Exit on any error
set -o pipefail # A pipeline fails if any command in it fails (needed for tee below)

# Create project directory structure
echo "Setting up project directories..."
mkdir -p ml_project/{data,models,scripts,logs}
cd ml_project

# Download dataset using wget (example.com is a placeholder URL)
echo "Downloading dataset..."
wget -O data/raw_data.csv "https://example.com/dataset.csv" || {
    echo "Download failed. Check your internet connection."
    exit 1
}

# Verify the download is present and non-empty
echo "Verifying download..."
if [ ! -s data/raw_data.csv ]; then
    echo "Error: Downloaded file is empty or missing"
    exit 1
fi

# Data preprocessing with standard Linux tools
echo "Preprocessing data..."

# Remove header and drop blank lines
tail -n +2 data/raw_data.csv | grep -v '^$' > data/cleaned_data.csv

# Split data into train/test sets (80/20 split)
total_lines=$(wc -l < data/cleaned_data.csv)
train_lines=$((total_lines * 80 / 100))
head -n "$train_lines" data/cleaned_data.csv > data/train.csv
tail -n +"$((train_lines + 1))" data/cleaned_data.csv > data/test.csv
echo "Data split: $train_lines training samples, $((total_lines - train_lines)) test samples"

# Create a simple training script
cat > scripts/train_model.py << 'EOF'
import sys
import time


def simple_training_simulation():
    """Simulate model training process"""
    print("Starting model training...")

    # Simulate training epochs
    for epoch in range(1, 6):
        print(f"Epoch {epoch}/5")
        time.sleep(1)  # Simulate computation time
        # Simulate decreasing loss
        loss = 1.0 / epoch
        print(f"Loss: {loss:.4f}")

    # Create mock model weights file
    weights_content = "# Model weights (simulated)\n"
    weights_content += "layer1_weights=[0.1, 0.2, 0.3]\n"
    weights_content += "layer2_weights=[0.4, 0.5, 0.6]\n"
    return weights_content


if __name__ == "__main__":
    try:
        weights = simple_training_simulation()
        # Save model weights
        with open("../models/model_weights.txt", "w") as f:
            f.write(weights)
        print("Training completed successfully!")
        print("Model weights saved to models/model_weights.txt")
    except Exception as e:
        print(f"Training failed: {e}")
        sys.exit(1)
EOF

# Run the training script
echo "Starting model training..."
cd scripts
python3 train_model.py 2>&1 | tee ../logs/training.log
cd ..

# Verify model output
if [ -f "models/model_weights.txt" ]; then
    echo "Model training completed successfully!"
    echo "Model size: $(du -h models/model_weights.txt | cut -f1)"
    echo "Training log: $(wc -l < logs/training.log) lines"
else
    echo "Error: Model weights not found"
    exit 1
fi

# Create archive of the complete project
echo "Creating project archive..."
tar -czf "ml_project_$(date +%Y%m%d_%H%M%S).tar.gz" data models scripts logs

echo "Setup complete! Project archived and ready for deployment."
```

This script showcases several key Linux concepts for ML engineers: directory structure creation with `mkdir`, file downloads using `wget` with error handling, data manipulation using `tail` and `grep` for preprocessing, a check that the download is non-empty, process logging with `tee`, and project archiving with `tar`. The script uses `set -e` together with `set -o pipefail` so that any failure stops the run, including a failing training script on the left side of the `tee` pipeline, which `set -e` alone would miss. It also demonstrates how to coordinate Python training scripts from shell environments. These patterns form the foundation of production ML pipelines on Linux systems.
