Linux and the shift toward AI

Linux is the default home for AI development, so it makes sense that we're finally using those same models to manage the servers they run on. We aren't looking at a total replacement of the terminal, but we are seeing a shift where manual log grepping is becoming a relic of the past.

Several factors make Linux a natural fit for AI workloads. Its open-source nature allows for deep customization and access to the underlying system, something often required for AI model development and deployment. The flexibility of the command line, combined with Linux's dominance on servers and in cloud infrastructure, means a large proportion of AI applications already run on Linux.

Currently, we're seeing the early stages of AI integration in Linux tools. Predictive analytics for system performance are becoming more common, and some security tools are beginning to use machine learning for threat detection. By 2026, I expect to see more sophisticated AI-powered tools for tasks like automated configuration, anomaly detection, and self-healing systems. The focus will be on practical applications that provide tangible benefits, rather than purely experimental features.

Automating Log Analysis with Machine Learning

Log analysis is a traditionally time-consuming task for system administrators. Machine learning offers a way to automate this process, identifying anomalies and potential security threats that might be missed by manual review. ML algorithms can learn to recognize patterns in log data and flag unusual events that could indicate a problem.

Several tools are starting to integrate ML for log analysis. The specific products and SDKs vary, but the general approach is the same: log data is fed into a trained model, which outputs a risk score or raises alerts based on what it finds. The challenge lies in the quality of the data. Supervised models require labeled datasets, which can be difficult and expensive to create.

Messy logs, full of irrelevant noise and formatting errors, break these models quickly. You'll often spend more time cleaning data than training the actual algorithm. Even with a well-trained model, you still need to be the one to decide if a flagged anomaly is a real breach or just a scheduled cron job acting up.
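To make the cleaning step concrete, here is a minimal sketch of one unsupervised approach that needs no labels: mask the variable parts of each line so similar messages collapse into one template, then surface the lines whose template is rare. The function names, the masking patterns, and the 5% cutoff are my own assumptions for illustration, not part of any particular tool.

```python
import re
from collections import Counter

# Patterns replaced with placeholders so that lines differing only in
# variable fields collapse to the same template. Order matters: IPs are
# masked before bare numbers.
_MASKS = [
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b0x[0-9a-fA-F]+\b"), "<HEX>"),
    (re.compile(r"\d+"), "<NUM>"),
]


def to_template(line):
    """Mask variable fields (IPv4 addresses, hex values, numbers)."""
    for pattern, placeholder in _MASKS:
        line = pattern.sub(placeholder, line)
    return line.strip()


def rare_lines(lines, max_share=0.05):
    """Return lines whose template accounts for at most max_share of all lines."""
    templates = [to_template(line) for line in lines]
    counts = Counter(templates)
    total = len(templates)
    return [
        line
        for line, template in zip(lines, templates)
        if counts[template] / total <= max_share
    ]
```

Rarity is only a proxy for "interesting". A rare line can still be a harmless one-off, which is why the human review step stays in the loop.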

Predictive Maintenance for Servers

Predictive maintenance aims to anticipate hardware failures and performance bottlenecks before they impact system availability. Machine learning can analyze server metrics – CPU usage, memory consumption, disk I/O, network traffic – to identify patterns that suggest an impending issue. This allows administrators to proactively address problems before they escalate.

Time series analysis and regression algorithms are well-suited for this type of predictive modeling. By analyzing historical data, these algorithms can learn to predict future values and identify deviations from expected behavior. For example, a gradual increase in disk I/O latency might indicate a failing hard drive.
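As a rough illustration of the regression idea, the sketch below fits a straight line to recent latency samples and estimates how many more samples it would take to cross a threshold. It assumes evenly spaced samples and a roughly linear trend, which real disks rarely honor, and the function names and threshold are hypothetical.

```python
def fit_line(values):
    """Least-squares fit of values against their index 0..n-1.

    Returns (slope, intercept).
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    sxy = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def samples_until(values, threshold):
    """Estimate how many future samples until the fitted trend reaches threshold.

    Returns 0.0 if the fitted line is already at or past the threshold,
    and None if the trend is flat or falling.
    """
    slope, intercept = fit_line(values)
    current = intercept + slope * (len(values) - 1)
    if current >= threshold:
        return 0.0
    if slope <= 0:
        return None
    return (threshold - current) / slope
```

With one latency sample per hour, a result of 48 would suggest roughly two days of headroom if the trend holds. Treat it as a prompt to look at the drive, not as a forecast.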

Implementing predictive maintenance often requires custom scripting to collect and process server metrics. The data needs to be collected at regular intervals, stored in a suitable format, and then fed into an ML model. While some monitoring tools offer basic predictive capabilities, a more tailored approach will likely yield better results. It’s about creating a feedback loop where insights from the model inform maintenance schedules and resource allocation.
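The collection side of that loop can be quite small. The sketch below parses text in the format of `/proc/meminfo` and appends a timestamped memory-usage row to a CSV stream. The function names and the two-column CSV layout are assumptions I made for the example; only the `/proc/meminfo` field names `MemTotal` and `MemAvailable` come from the kernel.

```python
import csv
import time


def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field: integer value}.

    Most fields are reported in kB; a few (such as HugePages_Total)
    are plain counts.
    """
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    return fields


def memory_used_pct(fields):
    """Percentage of memory in use, based on MemTotal and MemAvailable."""
    total = fields["MemTotal"]
    available = fields["MemAvailable"]
    return 100.0 * (total - available) / total


def append_sample(out, meminfo_text, now=None):
    """Write one 'unix_timestamp,used_pct' row to the file-like object out."""
    timestamp = int(time.time() if now is None else now)
    pct = memory_used_pct(parse_meminfo(meminfo_text))
    csv.writer(out).writerow([timestamp, f"{pct:.2f}"])
    return pct
```

In practice you would read `/proc/meminfo` and call `append_sample` from a cron job or systemd timer at a fixed interval, so the resulting file is an evenly spaced time series a model can consume.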

AI-Driven Security Automation

AI can significantly enhance Linux security by automating tasks like intrusion detection, malware analysis, and vulnerability scanning. Machine learning algorithms can be trained to identify malicious activity based on patterns in network traffic, system calls, and file system changes.

Blocking threats in real-time is the goal. A model can recognize a malware family's signature and quarantine a file before it executes. But attackers change their methods every week, so a model trained last month is already becoming a liability. You have to retrain these systems constantly or they'll miss the latest exploits.

Currently, some Linux security tools are incorporating AI features, but they are mostly focused on anomaly detection and threat intelligence. I'm cautious about relying solely on AI for security; human oversight is essential to validate alerts and prevent false positives. A layered security approach, combining AI with traditional security measures, is the most effective strategy.

- Intrusion Detection: Spotting unusual spikes in network traffic.

- Malware Analysis: Detecting and classifying malicious software.

- Vulnerability Scanning: Identifying security weaknesses in the system.
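For the intrusion detection case, spotting a traffic spike does not need a neural network to get started. Here is a minimal sketch using a rolling z-score over a traffic counter such as packets per second. The window size, the z-score limit, and the `min_sigma` floor are illustrative assumptions to tune for your own traffic.

```python
from collections import deque
from statistics import mean, pstdev


def find_spikes(samples, window=10, z_limit=3.0, min_sigma=1.0):
    """Return indices of samples far above the recent baseline.

    A sample is flagged when it sits more than z_limit standard
    deviations above the mean of the previous `window` unflagged samples.
    min_sigma is a floor on the standard deviation so that a perfectly
    flat baseline does not flag tiny changes.
    """
    history = deque(maxlen=window)
    spikes = []
    for i, value in enumerate(samples):
        if len(history) == window:
            mu = mean(history)
            sigma = max(pstdev(history), min_sigma)
            if (value - mu) / sigma > z_limit:
                spikes.append(i)
                continue  # keep the spike out of the baseline
        history.append(value)
    return spikes
```

Flagged samples are deliberately left out of the baseline so a single burst does not raise the bar for the next one. The trade-off is that a legitimate, lasting jump in traffic keeps getting flagged until someone resets the baseline.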

AI-assisted configuration

AI could potentially automate tasks like system configuration and optimization, suggesting optimal settings for kernel parameters, file system configurations, or network settings. The idea is that AI could learn from system behavior and automatically adjust configurations to improve performance and reliability.

This is a more speculative area, but it’s based on the principle of reinforcement learning, where an AI agent learns to optimize a system by trial and error. The agent would experiment with different configurations, monitor the results, and then adjust the configurations to achieve the desired outcome. This requires a robust testing environment and careful monitoring to avoid unintended consequences.
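To show what trial and error looks like in code, here is a sketch of an epsilon-greedy bandit, which is a much simpler technique than a full reinforcement learning agent but follows the same try, measure, adjust loop. Everything here is hypothetical: the class name, the candidate values, and the `measure` callback, which stands in for whatever benchmark you would run in a test environment.

```python
import random


class EpsilonGreedyTuner:
    """Pick among candidate settings, favouring the best average reward."""

    def __init__(self, candidates, epsilon=0.1, seed=None):
        self.candidates = list(candidates)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {c: 0 for c in self.candidates}
        self.means = {c: 0.0 for c in self.candidates}

    def choose(self):
        # Try every candidate once before trusting any average.
        untried = [c for c in self.candidates if self.counts[c] == 0]
        if untried:
            return untried[0]
        # Explore a random candidate with probability epsilon.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.candidates)
        return self.best()

    def record(self, candidate, reward):
        # Incremental update of the running mean reward.
        self.counts[candidate] += 1
        n = self.counts[candidate]
        self.means[candidate] += (reward - self.means[candidate]) / n

    def best(self):
        return max(self.candidates, key=lambda c: self.means[c])


def tune(tuner, measure, trials):
    """Run `trials` rounds of choose, measure, record; return the best setting."""
    for _ in range(trials):
        candidate = tuner.choose()
        tuner.record(candidate, measure(candidate))
    return tuner.best()
```

A call might look like `tune(EpsilonGreedyTuner([64, 128, 256, 512]), run_benchmark, 50)`, where `run_benchmark` is your own function that applies a setting on a test machine and returns a score. Pointing a loop like this at production is exactly the kind of unintended consequence to avoid.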

The practicality of this approach depends on the complexity of the system and the availability of data. It’s likely that AI-assisted configuration will initially be limited to specific areas, such as network tuning or storage optimization. But as AI technology advances, it could become a more widespread tool for system administrators.

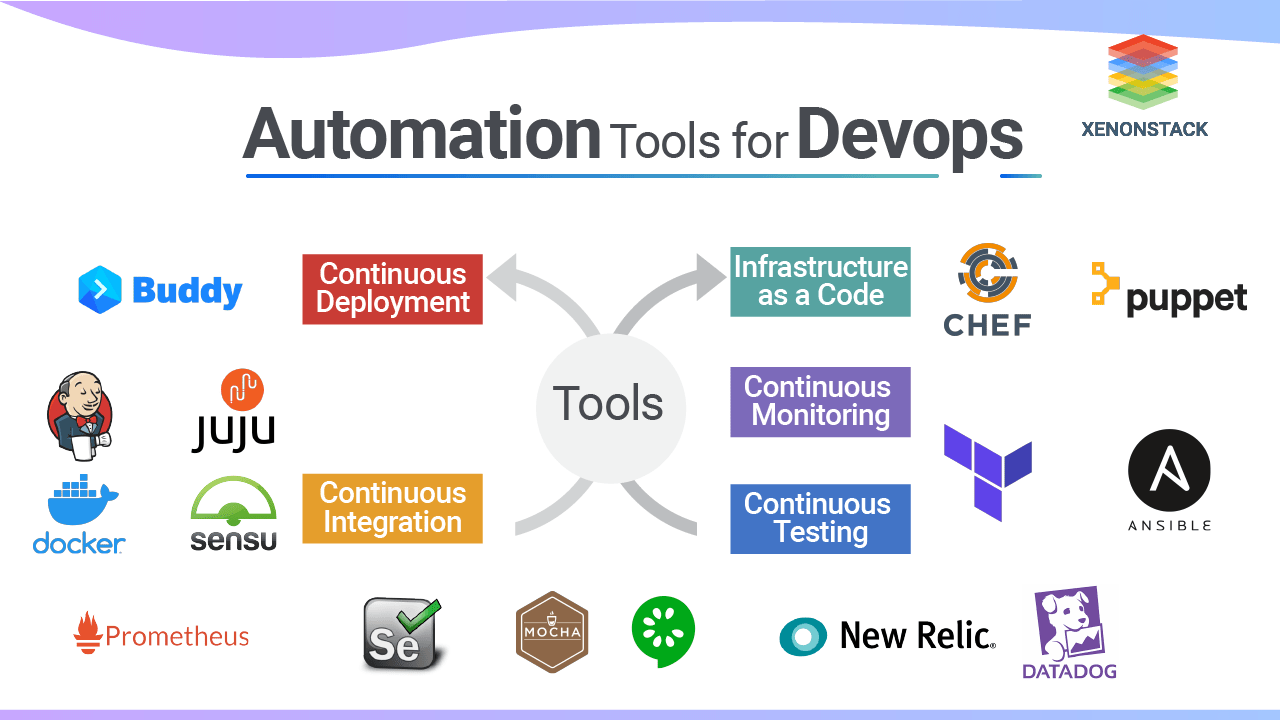

Tools and Frameworks to Watch

Several tools and frameworks are showing promise in the area of AI-powered Linux automation. Ingram Micro’s AI Line Card highlights vendors like Aisera, AMD, AWS, and IBM, all offering AI solutions that could integrate with Linux environments. However, direct integration details are often proprietary.

The Zamanhuseyinli/Linux-AI project on GitHub demonstrates efforts to build AI kernel tools and automation directly into the Linux ecosystem. While still in early development, it showcases the potential for open-source AI solutions for Linux. Red Hat Enterprise Linux is also increasingly focused on AI, with documentation outlining AI capabilities within the platform.

Beyond these, general-purpose machine learning frameworks like TensorFlow and PyTorch can be used to build custom AI solutions for Linux. These frameworks provide the tools and libraries needed to develop, train, and deploy ML models. The key is to find tools that are well-documented, actively maintained, and compatible with your existing infrastructure.

- Aisera: Offers AI-powered automation for IT operations.

- AWS: Provides a range of AI services that can be integrated with Linux.

- IBM: Offers AI solutions for system management and automation.

- Zamanhuseyinli/Linux-AI: An open-source attempt at kernel-level AI tools.

Real-world friction and risks

Adopting AI-powered automation in Linux system administration isn't without its challenges. Data privacy is a major concern, especially when dealing with sensitive system logs or user data. It’s important to ensure that AI models are trained on anonymized data and that data is handled in compliance with relevant regulations.
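One small piece of that is scrubbing identifiers before logs ever reach a training pipeline. The sketch below replaces IPv4 addresses with a keyed hash, so the same address always maps to the same token but cannot be read back without the key. Strictly speaking this is pseudonymization rather than full anonymization, and the function name, token format, and IPv4-only scope are assumptions of this example.

```python
import hashlib
import hmac
import re

_IPV4 = re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b")


def pseudonymize_ips(line, key):
    """Replace each IPv4 address in line with a stable keyed-hash token.

    key must be bytes and should be kept secret; anyone holding it can
    test guesses against the tokens.
    """
    def _token(match):
        digest = hmac.new(key, match.group(0).encode(), hashlib.sha256)
        return "ip-" + digest.hexdigest()[:12]

    return _IPV4.sub(_token, line)
```

Because the mapping is stable, a model can still learn that one source is behind many failed logins without the dataset exposing which source it was.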

Security risks are also a factor. AI models themselves can be vulnerable to attack, and malicious actors could potentially exploit these vulnerabilities to compromise the system. The need for skilled personnel is another hurdle. Implementing and maintaining AI-powered automation requires expertise in machine learning, scripting, and system administration.

Finally, it’s important to be aware of the potential for bias in AI models. If the data used to train the model is biased, the model may perpetuate those biases in its predictions. Human oversight is crucial to identify and mitigate these risks. AI is a powerful tool, but it's not a replacement for human judgment.

- Data Privacy: Protecting sensitive system and user data.

- Security Risks: Protecting AI models from attack.

- Skill Gap: Needing personnel with expertise in AI and system administration.

- Bias in AI Models: Ensuring fairness and accuracy in predictions.

AI Tools for Linux System Administration - Comparative Assessment (2026)

| Tool/Framework | Ease of Use | Linux Integration | Scalability | Community Support |
|---|---|---|---|---|
| Aisera | Medium - Requires training data | Good - Designed for IT operations | High - Enterprise focused | Medium - Growing, primarily enterprise users |
| AMD AI Engine | Medium - Developer focused | Potential - Dependent on AMD hardware adoption | Medium - Tied to AMD infrastructure | Medium - Active developer community |
| AWS SageMaker | Medium - Broad feature set, learning curve | Good - Excellent Linux support via EC2 and containers | Very High - Designed for large-scale deployments | High - Large and active community |
| Cisco AI Networking | Medium - Network-centric focus | Good - Strong integration with Cisco network devices on Linux | Medium - Scalable within Cisco ecosystem | Medium - Cisco-focused community |
| Google AI Platform | Medium - Requires familiarity with Google Cloud | Good - Strong support for Linux-based deployments | Very High - Leverages Google's infrastructure | High - Large and active community |
| HPE Machine Learning Development Environment | Medium - Targeted at data scientists | Good - Optimized for HPE hardware and Linux | Medium - Scalable within HPE infrastructure | Medium - HPE-focused community |
| IBM Watson | Medium - Complex, requires specialized skills | Good - Supports Linux environments | High - Designed for enterprise-level scale | Medium - Established, but focused on enterprise solutions |

This is a qualitative comparison only. Confirm current product details in the official docs before making implementation choices.
